Attention Residuals: How Kimi Rethinks Depth-Wise Information Flow in LLMs

Every modern LLM is built on residual connections. Since ResNets introduced them in 2015, the formula has barely changed: each layer simply adds its output to what came before. Whether this fixed, uniform accumulation is actually optimal has rarely been questioned, and the Kimi team at Moonshot AI now challenges it directly.

Their paper, Attention Residuals, makes a beautifully simple observation: the way information aggregates across depth in a neural network suffers from the exact same bottleneck that RNNs had across time, and it can be fixed with the exact same tool: attention.

The Problem: Residual Connections Are Blind

Standard residual connections update the hidden state at layer $l$ as:

$$h_l = h_{l-1} + f_{l-1}(h_{l-1})$$

Unrolling this recurrence, the input to any layer is just a uniformly weighted sum of the first hidden state $h_1$ and all prior layer outputs:

$$h_l = h_1 + \sum_{i=1}^{l-1} f_i(h_i)$$

Every layer contribution gets the same weight of 1. There is no mechanism for a deeper layer to say “I need more of layer 3’s output and less of layer 7’s.” This causes three concrete problems:

- No selective access: attention layers and MLP layers receive the same aggregated state, despite having different information needs.

- Irreversible loss: once a layer’s signal is diluted by subsequent additions, it cannot be recovered.

- PreNorm magnitude explosion: with PreNorm (the dominant normalization scheme), hidden-state magnitudes grow as $O(L)$ with depth, progressively diluting each layer’s relative contribution.

This last point is particularly insidious. As the Kimi team shows, later layers are forced to learn increasingly large outputs just to remain influential over the ballooning residual stream, which destabilizes training dynamics.
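To make the unrolled form concrete, here is a minimal sketch in plain Python. The toy layers (`make_toy_layer`, a random linear map followed by `tanh`) are hypothetical stand-ins for real attention or MLP blocks, not anything from the paper. The sketch only checks that running the recurrence gives the same state as adding every layer output with weight 1.

```python
import math
import random

def make_toy_layer(dim, seed):
    """Hypothetical stand-in for f_i: a fixed random linear map followed by tanh."""
    rng = random.Random(seed)
    weights = [[rng.gauss(0.0, 0.5) for _ in range(dim)] for _ in range(dim)]

    def layer(h):
        return [math.tanh(sum(w * x for w, x in zip(row, h))) for row in weights]

    return layer

def run_residual_stack(h1, layers):
    """Apply h_l = h_{l-1} + f_{l-1}(h_{l-1}) and record every layer output."""
    h = list(h1)
    outputs = []
    for f in layers:
        out = f(h)
        outputs.append(out)
        h = [a + b for a, b in zip(h, out)]
    return h, outputs

def unrolled(h1, outputs):
    """Closed form: h_1 plus every layer output, each with weight exactly 1."""
    return [h1[d] + sum(out[d] for out in outputs) for d in range(len(h1))]

def norm(x):
    """Euclidean norm of a vector given as a list of floats."""
    return math.sqrt(sum(v * v for v in x))

layers = [make_toy_layer(8, seed) for seed in range(12)]
h_final, outs = run_residual_stack([1.0] * 8, layers)
# Norm of the first layer's output relative to the final residual stream.
print(norm(outs[0]) / norm(h_final))
```

The printed ratio gives a rough feel for how small a single layer's share of the stream can become once many outputs have been added on top of it. The exact value depends on the toy layers and says nothing about real models.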

The Key Insight: Time-Depth Duality

Here’s where the paper gets elegant. The authors observe a formal duality between depth-wise accumulation and sequential recurrence in RNNs:

- An RNN compresses all past tokens into a single hidden state over time.

- A residual network compresses all past layers into a single hidden state over depth.

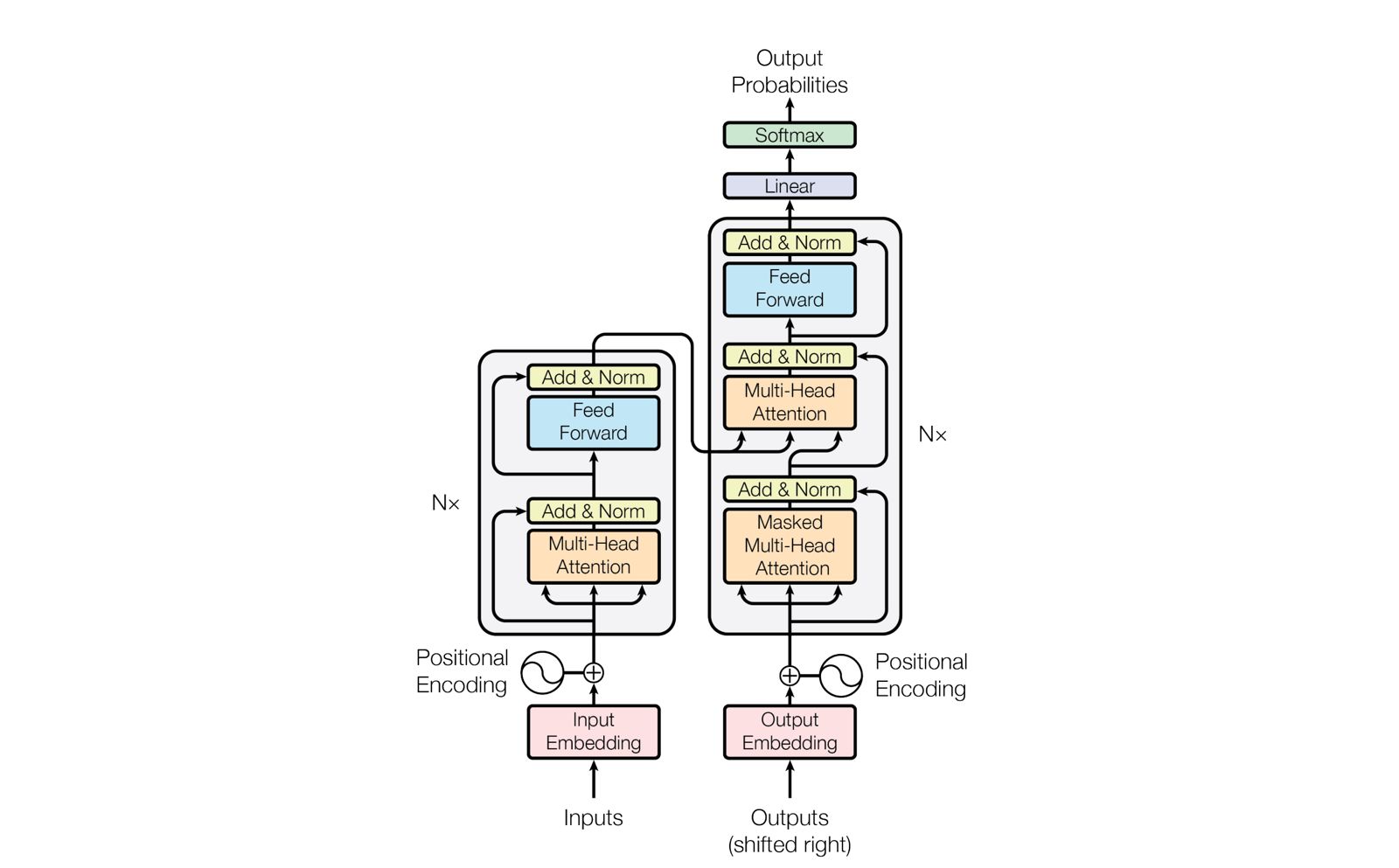

The Transformer revolution solved the RNN bottleneck by replacing recurrence with attention over the sequence dimension. The Kimi team proposes the same transition, but over the depth dimension.

Standard residual connections perform depth-wise linear attention. Attention Residuals generalize them to depth-wise softmax attention.

This framing is not just a nice analogy. It’s the core theoretical contribution. The paper provides a unified structured-matrix analysis (Section 6) showing that standard residuals, Highway Networks, and other recurrence-based variants all correspond to forms of depth-wise linear attention. AttnRes completes the linear-to-softmax transition that proved transformative over sequences.

The Method: Attention Residuals (AttnRes)

Instead of uniform accumulation, each layer computes softmax attention over all preceding layer outputs:

$$h_l = \sum_{i=0}^{l-1} \alpha_{i \to l} \cdot v_i$$

where the attention weights are:

$$\alpha_{i \to l} = \frac{\phi(q_l, k_i)}{\sum_{j=0}^{l-1} \phi(q_l, k_j)}$$

with $\phi(q, k) = \exp\left(q^\top \text{RMSNorm}(k)\right)$, and:

$$q_l = w_l, \quad k_i = v_i = \begin{cases} h_1 & i = 0 \\ f_i(h_i) & 1 \leq i \leq l-1 \end{cases}$$
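For comparison, the standard residual update from earlier fits the same template if every normalized weight $\alpha_{i \to l}$ is replaced by the constant 1. Using the same $v_i$ as above:

$$h_l = \sum_{i=0}^{l-1} 1 \cdot v_i = h_1 + \sum_{i=1}^{l-1} f_i(h_i)$$

AttnRes swaps those constant weights for the learned, input-dependent $\alpha_{i \to l}$.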

The beauty of this design lies in its simplicity:

- One learned vector per layer: the pseudo-query $w_l \in \mathbb{R}^d$ is the only new parameter. No complex routing, no extra heads.

- RMSNorm on keys: prevents layers with large-magnitude outputs from dominating the attention weights, directly addressing the PreNorm explosion problem.

- Content-dependent selection: unlike fixed coefficients, the attention weights adapt based on what each layer actually produced for the current input.

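- Zero initialization: all pseudo-queries are initialized to zero, so every score is $\exp(0) = 1$ and the initial attention weights are uniform. AttnRes therefore starts as an equal-weight average of all prior outputs, which is standard residual accumulation up to a $1/l$ scale, and learns to deviate from there.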

The parameter overhead is negligible: one $d$-dimensional vector and one RMSNorm per layer, a tiny fraction of the model’s total parameters.
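The formulas above can be sketched in a few lines of plain Python. This is an illustrative sketch, not the paper's implementation: it assumes a gain-free RMSNorm with a small `eps`, operates on a single token's vectors, and the function names are made up for this example.

```python
import math

def rms_norm(x, eps=1e-6):
    """Gain-free RMSNorm (assumed form): divide by the root-mean-square of x."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]

def attn_res_weights(query, keys):
    """Softmax over q . RMSNorm(k_i), taken across all earlier outputs."""
    scores = [sum(q * k for q, k in zip(query, rms_norm(key))) for key in keys]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attn_res_input(query, prior_outputs):
    """h_l = sum_i alpha_{i->l} * v_i, with keys equal to values."""
    weights = attn_res_weights(query, prior_outputs)
    dim = len(query)
    return [sum(w * v[d] for w, v in zip(weights, prior_outputs)) for d in range(dim)]

# Three earlier outputs with very different magnitudes.
prior = [[1.0, 0.0, 0.0, 0.0], [0.0, 2.0, 0.0, 0.0], [0.0, 0.0, 3.0, 0.0]]

zero_query = [0.0, 0.0, 0.0, 0.0]       # zero init: uniform weights
selective_query = [0.0, 5.0, 0.0, 0.0]  # a query aligned with the second output

print(attn_res_weights(zero_query, prior))
print(attn_res_weights(selective_query, prior))
print(attn_res_input(selective_query, prior))
```

With the zero query the three weights are equal, which is the zero-initialization behaviour described above. Because keys are normalized before scoring, scaling one of the prior outputs by a constant leaves the weights essentially unchanged, although the value itself is still mixed in at its original magnitude.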

Scaling It Up: Block AttnRes

Full AttnRes attends over all $L$ layer outputs, requiring $O(Ld)$ memory and $O(L^2d)$ compute over depth. While $L$ is small compared to sequence length, large-scale training with pipeline parallelism makes this impractical because every layer’s output must be communicated across pipeline stages.

The solution is Block Attention Residuals. Layers are partitioned into $N$ blocks of $S = L/N$ layers each. Within each block, standard residuals accumulate outputs into a single block representation:

$$b_n = \sum_{j \in B_n} f_j(h_j)$$

Across blocks, full softmax attention is applied over only the $N$ block-level representations. This reduces memory and communication from $O(Ld)$ to $O(Nd)$.

The paper shows that $N \approx 8$ blocks recover most of the benefit of full AttnRes across all model scales, a remarkably small number. At the largest scale tested, Block AttnRes (with $N = 8$) achieves a validation loss within 0.001 of Full AttnRes.
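The pooling step $b_n = \sum_{j \in B_n} f_j(h_j)$ can be sketched as follows. This covers only how layer outputs collapse into $N$ block representations, assuming contiguous, equal-sized blocks and $L$ divisible by $N$. The helper name `block_representations` is hypothetical, and the cross-block attention and its handling of partially completed blocks are not shown.

```python
def block_representations(layer_outputs, num_blocks):
    """Sum layer outputs within each of num_blocks equal-sized contiguous blocks."""
    num_layers = len(layer_outputs)
    if num_layers % num_blocks != 0:
        raise ValueError("this sketch assumes L is divisible by N")
    size = num_layers // num_blocks  # S = L / N layers per block
    blocks = []
    for n in range(num_blocks):
        members = layer_outputs[n * size:(n + 1) * size]
        # Element-wise sum over the layers that belong to block n.
        blocks.append([sum(column) for column in zip(*members)])
    return blocks

# Six toy layer outputs of dimension 2, pooled into N = 3 blocks of S = 2 layers.
outputs = [[i, 2 * i] for i in range(1, 7)]
print(block_representations(outputs, 3))
```

Attention then only has to keep and compare `num_blocks` vectors instead of one per layer, which is where the $O(Ld)$ to $O(Nd)$ reduction comes from.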

Infrastructure: Making It Actually Work

A clean idea is worthless if it can’t scale. The Kimi team put serious engineering effort into making Block AttnRes practical:

Cross-stage caching eliminates redundant communication under pipeline parallelism. Instead of transmitting the full block history at every stage boundary, each rank caches previously received blocks. Only incremental blocks are transmitted, reducing peak per-transition cost from $O(C)$ to $O(P)$, a $V\times$ improvement in the paper's pipeline notation, where the stated factor implies $C = PV$.

Two-phase inference exploits the fact that pseudo-queries are decoupled from the forward computation:

- Phase 1: batch all $S$ inter-block queries into a single matrix multiplication (amortizing memory access).

- Phase 2: compute intra-block attention sequentially, merging with Phase 1 via online softmax.

The result: less than 2% inference latency overhead and less than 4% training overhead under pipeline parallelism. The per-layer memory I/O is just $(N/S + 5)d$, compared to $34d$ for DeepSeek’s mHC with $m = 4$ streams.
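The online-softmax merge mentioned in Phase 2 is a standard trick: two softmax computations over disjoint score sets can be combined exactly if each keeps its running maximum, its sum of exponentials, and its unnormalized weighted sum. The sketch below shows that merge in isolation, in plain Python with hypothetical function names. It is not the paper's kernel, and the split into "inter-block" and "intra-block" scores is only illustrative.

```python
import math

def partial_softmax(scores, values):
    """Return (max score, sum of exp(score - max), unnormalized weighted value sum)."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    dim = len(values[0])
    acc = [sum(e * v[d] for e, v in zip(exps, values)) for d in range(dim)]
    return top, sum(exps), acc

def merge(part_a, part_b):
    """Combine two partial results as if softmax had covered both score sets at once."""
    max_a, sum_a, acc_a = part_a
    max_b, sum_b, acc_b = part_b
    top = max(max_a, max_b)
    scale_a = math.exp(max_a - top)  # rescale each part to the shared maximum
    scale_b = math.exp(max_b - top)
    total = sum_a * scale_a + sum_b * scale_b
    acc = [a * scale_a + b * scale_b for a, b in zip(acc_a, acc_b)]
    return top, total, acc

def finalize(part):
    """Normalize the accumulated weighted sum by the total of exponentials."""
    _, total, acc = part
    return [a / total for a in acc]

scores = [0.5, 2.0, -1.0, 3.0]
values = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 4.0]]

inter = partial_softmax(scores[:2], values[:2])  # e.g. scores against cached blocks
intra = partial_softmax(scores[2:], values[2:])  # e.g. scores computed later
merged = finalize(merge(inter, intra))
direct = finalize(partial_softmax(scores, values))
print(merged, direct)
```

The two printed vectors agree up to floating-point rounding, which is why the batched part and the sequential part can be computed separately and still produce the same attention output.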

Results: Consistent Gains Everywhere

Scaling Laws

Across five model sizes, both Full and Block AttnRes consistently outperform the baseline. The fitted scaling curves tell the story:

| Variant | Scaling Law |
|---|---|
| Baseline | $L = 1.891 \times C^{-0.057}$ |
| Block AttnRes | $L = 1.870 \times C^{-0.058}$ |
| Full AttnRes | $L = 1.865 \times C^{-0.057}$ |

Block AttnRes reaches the baseline’s loss with about 1.25x less compute, that is, roughly 80% of the compute the baseline needs: free performance from better information routing.
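The compute advantage can be read off the fitted curves by inverting $L = a \cdot C^{b}$. The sketch below does this for the Baseline and Block AttnRes fits from the table. The unit of $C$ is not stated in this article, so the sample inputs (1 and 10) are arbitrary assumptions. Because the two exponents differ slightly, the multiplier changes with $C$ rather than being a single constant.

```python
def loss(prefactor, exponent, compute):
    """Evaluate the fitted power law L = prefactor * compute ** exponent."""
    return prefactor * compute ** exponent

def compute_to_reach(prefactor, exponent, target_loss):
    """Invert the power law: the compute at which the fit predicts target_loss."""
    return (target_loss / prefactor) ** (1.0 / exponent)

# (prefactor, exponent) pairs copied from the scaling-law table.
BASELINE = (1.891, -0.057)
BLOCK_ATTNRES = (1.870, -0.058)

def baseline_compute_multiplier(compute):
    """How much more compute the baseline fit needs to match Block AttnRes at `compute`."""
    target = loss(*BLOCK_ATTNRES, compute)
    return compute_to_reach(*BASELINE, target) / compute

for c in (1.0, 10.0):  # arbitrary sample points in the table's (unstated) units
    print(c, baseline_compute_multiplier(c))
```

For these sample points the multiplier lands in the neighbourhood of the 1.25x figure, slightly below it at the smaller budget and slightly above it at the larger one.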

Downstream Benchmarks (48B MoE, 1.4T tokens)

Integrated into the Kimi Linear architecture (48B total / 3B activated parameters), Block AttnRes improves on every evaluated benchmark:

| Benchmark | Baseline | AttnRes | Delta |
|---|---|---|---|
| GPQA-Diamond | 36.9 | 44.4 | +7.5 |
| Math | 53.5 | 57.1 | +3.6 |
| HumanEval | 59.1 | 62.2 | +3.1 |
| C-Eval | 79.6 | 82.5 | +2.9 |
| MMLU | 73.5 | 74.6 | +1.1 |
| TriviaQA | 69.9 | 71.8 | +1.9 |
| BBH | 76.3 | 78.0 | +1.7 |
| GSM8K | 81.7 | 82.4 | +0.7 |

The largest gains are on multi-step reasoning (GPQA-Diamond, Math) and code generation (HumanEval), tasks where selective retrieval from earlier layers matters most.

Training Dynamics

The training analysis reveals why AttnRes helps:

- Output magnitudes stay bounded across depth, unlike the baseline’s monotonic growth.

- Gradient distribution becomes uniform: the baseline concentrates gradients disproportionately in early layers, while AttnRes spreads them evenly.

- The PreNorm dilution problem is structurally solved: selective aggregation at block boundaries resets the accumulation, creating a bounded periodic pattern.

How It Compares to DeepSeek’s mHC

DeepSeek’s concurrent work, Manifold-Constrained Hyper-Connections (mHC), tackles the same problem from a different angle. Where Hyper-Connections (HC) expand the residual stream width to $n \times C$ dimensions with learned mixing matrices, mHC constrains these matrices onto a manifold to restore the identity mapping property and fix HC’s training instability.

The comparison is illuminating:

| | AttnRes (Kimi) | mHC (DeepSeek) |
|---|---|---|
| Approach | Softmax attention over depth | Manifold-constrained stream mixing |
| New params per layer | 1 vector ($d$-dim) + RMSNorm | Multiple $n \times n$ matrices |
| Memory I/O per layer | $5.5d$ (Block, typical) | $34d$ ($m=4$ streams) |
| Scaling law performance | Comparable | Comparable |
| Conceptual simplicity | High (one clear mechanism) | Moderate (manifold projection adds complexity) |

In the Kimi paper’s own scaling experiments (Table 2), Full AttnRes outperforms mHC at every model size except one, while Block AttnRes matches it at 6x lower memory I/O per layer. Both methods clearly beat the baseline, but AttnRes achieves this with a far simpler mechanism and lighter infrastructure footprint.
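The "6x" figure follows from the per-layer I/O numbers quoted earlier. As a worked check, assume $N/S = 0.5$, which is the ratio implied by the table's $5.5d$ entry. The article does not state the exact $N$ and $S$ behind the "typical" configuration.

$$\left(\frac{N}{S} + 5\right) d = (0.5 + 5)\,d = 5.5d, \qquad \frac{34d}{5.5d} \approx 6.2$$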

Why This Paper Matters

Residual connections have gone largely untouched for a decade. Most work optimized the layers between the residuals (attention mechanisms, MLP architectures, expert routing) while the residual connection itself remained a fixed identity shortcut. The Kimi team challenges this with an idea that is:

- Theoretically grounded: the time-depth duality isn’t a hand-wavy analogy but a formal equivalence backed by structured-matrix analysis.

- Simple to implement: one learned vector per layer, zero initialization, drop-in replacement.

- Practically efficient: less than 2% inference overhead with the two-phase strategy.

- Consistently effective: gains on every benchmark, every model size, with favorable scaling laws.

The most compelling aspect is how natural the idea feels once stated. Just as attention replaced RNN recurrence over sequences, Attention Residuals replace residual recurrence over depth. It’s the kind of insight that, in retrospect, feels obvious, and those tend to be the ones that stick.
